The sudden disappearance of digital connectivity in a hyper-connected society like Singapore does more than just pause social media feeds; it paralyzes the essential circulatory system of modern urban commerce and public safety. When Singtel, the nation’s largest telecommunications provider, experienced a massive eight-hour outage on March 16, the repercussions were felt immediately across every sector of the economy. From point-of-sale terminals failing in crowded shopping malls to ride-hailing drivers unable to locate their passengers, the disruption highlighted a profound vulnerability in the local digital infrastructure. While the company initially claimed that services had been fully restored by the evening, the arrival of a second wave of connectivity issues the following morning turned a manageable technical glitch into a full-blown public relations crisis. This sequence of events has not only frustrated millions of individual subscribers but has also prompted a rigorous evaluation of how critical infrastructure is maintained and defended in an era where downtime is increasingly viewed as an unacceptable institutional failure.

Discrepancies in Communication and the Erosion of Consumer Trust

The fallout from these consecutive disruptions was exacerbated by a significant disconnect between the official corporate narrative and the lived experience of the customer base. While Singtel’s technical teams categorized the secondary March 17 event as an isolated incident affecting only a “small number” of users, social media platforms and independent monitoring sites told a very different story. Thousands of reports flooded in from various districts, including many from users of GOMO, Singtel’s digital-leaning sub-brand, suggesting that the problem was far more systemic than the company was willing to admit. This perceived lack of transparency is often more damaging to a brand’s reputation than the technical failure itself, as it leaves consumers feeling ignored and misinformed. When users were advised to perform basic troubleshooting steps like toggling airplane mode or restarting their devices to no avail, the frustration peaked, leading to a visible surge in “subscriber churn” as customers began exploring more reliable alternatives.

Amid this atmosphere of dissatisfaction, the impact on consumer behavior has been swift, with many individuals opting to switch to competitors like StarHub or M1. This migration is not merely a reaction to a single day of poor service but a calculated response to a perceived loss of long-term reliability. In a market where high-speed internet and mobile data are treated as basic utilities, the inability to provide a stable connection during peak hours is seen as a breach of the fundamental service agreement. For many small business owners who rely on Singtel for their daily operations, the financial losses incurred during the eight-hour window were significant enough to justify the logistical headache of changing providers. This trend underscores a broader shift in the telecommunications landscape, where brand loyalty is increasingly fragile and secondary to the demand for uptime. Consequently, the company now faces the uphill task of rebuilding its relationship with a disillusioned public that is no longer satisfied with standard apologies.

Regulatory Pressure and the Search for Technical Accountability

The Infocomm Media Development Authority has signaled that it will not treat these incidents as routine operational hiccups and has launched a formal investigation into the root causes of the failures. Preliminary findings from the regulator have helped to rule out a coordinated cyberattack, which eased early national security concerns. However, the resulting focus on internal operational lapses has put even more pressure on the service provider to justify its maintenance and failover protocols. The regulator’s public commitment to taking “strong regulatory action” should negligence be identified serves as a stern warning to the entire industry that the government has a low tolerance for instability in its core communication networks. The investigation is expected to examine the company’s internal logs and hardware configurations to determine why the primary systems failed and, perhaps more importantly, why the backup systems were unable to shoulder the redirected traffic load.

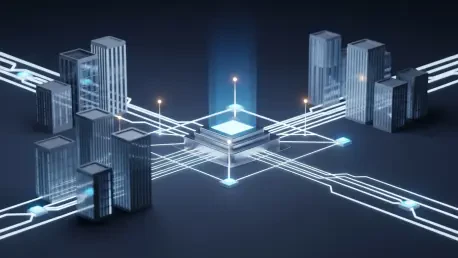

Technical analysts observing the situation suggest that the proximity of the two outages likely points to a phenomenon known as the “stress-test” effect, where the initial recovery process inadvertently exposes secondary vulnerabilities. Even if the two events were technically distinct, as the provider claims, the massive surge in data traffic and signaling requests during a service restoration can place immense strain on a network’s underlying architecture. This often leads to a “thundering herd” problem, where millions of devices attempting to reconnect simultaneously overwhelm the authentication servers and gateway controllers. This cascading effect suggests that while the individual hardware components may be robust, the sophisticated software orchestration required to manage modern 5G and fiber-optic networks may lack the resilience needed to handle large-scale recovery scenarios. Experts argue that until these recovery protocols are rigorously tested against such high-load conditions, the risk of a recurring “bounce-back” outage remains a persistent threat to network stability.
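
One widely used mitigation for the “thundering herd” pattern described above is to have each device wait a randomized, exponentially growing interval before retrying. The sketch below is illustrative only and does not describe Singtel’s actual reconnection logic; the function names, the 32-second spread, and the device count are all assumptions chosen for the example.

```python
import random

def reconnect_delay(attempt, base=1.0, cap=300.0, rng=random):
    """Full-jitter exponential backoff.

    Returns a delay drawn uniformly from [0, min(cap, base * 2**attempt)] seconds.
    All parameter values here are hypothetical.
    """
    ceiling = min(cap, base * (2 ** attempt))
    return rng.uniform(0.0, ceiling)

def peak_arrivals(delays, window=1.0):
    """Largest number of reconnect attempts landing in any one window-sized bucket."""
    buckets = {}
    for delay in delays:
        key = int(delay // window)
        buckets[key] = buckets.get(key, 0) + 1
    return max(buckets.values())

def simulate(devices=10000, attempt=5, seed=0):
    """Compare the worst one-second burst with and without jitter."""
    rng = random.Random(seed)
    # Naive case: every device retries the instant service returns.
    naive = [0.0] * devices
    # Jittered case: each device picks its own delay within a 32-second ceiling.
    jittered = [reconnect_delay(attempt, rng=rng) for _ in range(devices)]
    return peak_arrivals(naive), peak_arrivals(jittered)

if __name__ == "__main__":
    naive_peak, jittered_peak = simulate()
    print("worst one-second burst without jitter:", naive_peak)
    print("worst one-second burst with jitter:", jittered_peak)
```

In this toy model the unjittered devices all arrive in the same second, while the jittered ones spread across roughly half a minute, so the authentication tier sees a far smaller peak at the cost of slower recovery for any individual device.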

Strategic Enhancements for Long-Term Network Resiliency

The resolution of this crisis required more than immediate technical patches; it demanded a fundamental shift in how the organization approached its infrastructure redundancy and disaster recovery frameworks. The implementation of more sophisticated AI-driven network monitoring tools became a priority, with the goal of detecting early warning signs of hardware degradation before they escalate into total system failures. By utilizing predictive analytics, engineers can identify anomalous patterns in signaling traffic that often precede a major disruption, allowing for proactive intervention. Furthermore, the company began a comprehensive review of its internal communication silos to ensure that when a crisis occurs, accurate information is relayed to both the public and the regulators in real time. This level of transparency is essential for managing public expectations and maintaining a degree of institutional credibility during periods of forced downtime, as it replaces vague assurances with concrete technical updates.
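
As a rough illustration of what identifying “anomalous patterns in signaling traffic” can mean in its simplest form, the sketch below flags any sample that deviates sharply from a trailing window of recent values. It is a basic rolling z-score check, not the AI-driven tooling referred to above, and the sample values, window size, and threshold are invented for the example.

```python
from collections import deque
from statistics import mean, pstdev

def find_anomalies(samples, window=10, threshold=3.0):
    """Return the indices of samples that sit more than `threshold` standard
    deviations away from the mean of the preceding `window` samples."""
    history = deque(maxlen=window)
    flagged = []
    for index, value in enumerate(samples):
        # Only score a sample once a full window of history is available.
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                flagged.append(index)
        history.append(value)
    return flagged

if __name__ == "__main__":
    # Hypothetical signaling requests per second, with one sudden spike.
    rate = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 99, 250, 100]
    print(find_anomalies(rate))
```

A production system would need to handle seasonality, and would have to keep a spike from contaminating the baseline that later samples are compared against, but the core idea of comparing each new reading with recent history is the same.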

In the months following the disruptions, the focus shifted toward investing in decentralized network architectures that limit the “blast radius” of any single point of failure. By decoupling critical authentication services and distributing gateways, the provider aimed to ensure that a localized hardware fault in one data center could not trigger a nationwide blackout. This strategy also involved increasing the capacity of secondary backup systems to handle 100% of peak traffic loads, rather than the lower thresholds previously deemed sufficient for temporary emergencies. The government-led investigation concluded that while there was no evidence of intentional negligence, the existing protocols had become outdated in the face of modern data demands. Ultimately, the industry must recognize that as digital integration deepens, the requirements for network resilience will only become more stringent. Prioritizing robust failover mechanisms and transparent crisis management is no longer an optional business strategy but a core requirement for any telecommunications provider wishing to survive in an increasingly unforgiving and competitive market.
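
The requirement that backup systems carry 100% of peak traffic can be expressed as a simple capacity check: for each site, remove it and ask whether the remaining sites can still absorb the full peak load. The sketch below works through that arithmetic. The site names and capacity figures are hypothetical and do not reflect any real network.

```python
def survives_single_failure(site_capacity, peak_load):
    """Map each site to True if the remaining sites can carry `peak_load`
    on their own when that site fails."""
    total_capacity = sum(site_capacity.values())
    return {
        site: total_capacity - capacity >= peak_load
        for site, capacity in site_capacity.items()
    }

if __name__ == "__main__":
    # Hypothetical capacities in arbitrary traffic units.
    balanced = {"dc_a": 60, "dc_b": 60, "dc_c": 60}
    lopsided = {"dc_a": 100, "dc_b": 40}
    print(survives_single_failure(balanced, peak_load=100))
    print(survives_single_failure(lopsided, peak_load=100))
```

In the balanced layout the loss of any one site leaves 120 units against a peak of 100, so every entry is True. In the lopsided layout, losing `dc_a` leaves only 40 units, which is the kind of undersized backup threshold the paragraph above describes.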