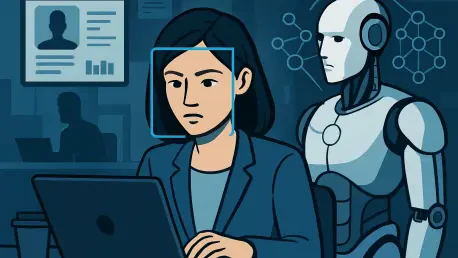

Every subtle movement of a computer mouse across a desk is no longer just a ghost in the machine but a valuable blueprint for the future of digital labor. Meta is currently transforming its corporate offices into a massive laboratory, where the daily activities of U.S.-based employees serve as the primary fuel for a new generation of autonomous software. By tracking every click and keystroke, the social media giant is effectively recording the “muscle memory” of the modern professional to create AI agents capable of mimicking human work patterns with startling precision.

This shift represents a fundamental reorganization of the workplace, moving away from a model where humans are the primary doers toward one where they function as high-level directors. The company’s internal strategy centers on offloading manual digital tasks (tedious data entry, complex scheduling, and multi-app navigation) to intelligent agents. As these tools become more sophisticated, the role of the employee evolves into one of oversight, ensuring that the AI correctly interprets the nuances of professional workflows while maintaining the quality of the final output.

The Shift from Workers to Directors in the Age of Agentic AI

While most employees view their daily clicks and keystrokes as mere routine, Meta is beginning to treat every mouse movement as a high-value training signal. The company is pivoting toward a future where human staff no longer execute manual digital labor but instead act as supervisors for highly capable AI agents. This transition marks a fundamental change in the corporate landscape, where the primary role of a professional shifts from “doing” to “directing” the digital workflow.

As this new paradigm takes hold, the definition of productivity is being rewritten to favor strategic decision-making over raw output. By delegating repetitive interface interactions to software, Meta aims to free its workforce to tackle creative problems that machines cannot yet solve. This evolution suggests that the value of a human worker in the coming years will not be measured by their speed at a keyboard, but by their ability to refine and guide the autonomous systems performing the bulk of the work.

Decoding the Model Capability Initiative: The Rise of Agentic AI

The shift toward “agentic” AI represents the next frontier beyond simple chatbots, focusing on software that can autonomously navigate complex interfaces. Meta’s introduction of the Model Capability Initiative (MCI) is a direct response to the limitations of current automation, which often struggles with nuanced human interactions such as using keyboard shortcuts or navigating intricate dropdown menus. By bridging the gap between static data and real-time human behavior, Meta aims to keep pace with competitors like OpenAI and Anthropic in the race to build truly autonomous workplace assistants.

Unlike previous iterations of AI that only responded to text prompts, MCI is designed to understand the physical and logical flow of a workday. The initiative seeks to capture the subtle “how-to” knowledge that is rarely documented in training manuals but is essential for getting things done. By observing how experienced staff manage technical friction, the AI learns to anticipate obstacles and execute multi-step processes across various software ecosystems without constant human intervention.

Granular Monitoring: Fueling the Next Generation of Workplace Automation

Meta’s MCI tool functions by capturing a comprehensive “data exhaust” from U.S.-based employees, turning their workdays into a massive training set. This involves logging granular interactions, including keystrokes and periodic screen snapshots, to teach AI models how to replicate professional workflows. Under the broader “Agent Transformation Accelerator” strategy championed by CTO Andrew Bosworth, these data points are used to train agents to handle technical hurdles that previously required a human touch.

The objective is to create a seamless replication of digital labor, allowing AI to execute tasks that once demanded hours of manual input. This massive collection effort turns every application used by an employee into a classroom for the model. Consequently, the software begins to master not just the data within the apps, but the very way those apps are manipulated to achieve specific business goals, effectively turning human expertise into a scalable digital asset.

The High Cost of Training: Privacy Risks and the Observer Effect

Industry analysts and security experts warn that harvesting such intimate data creates a minefield of privacy and compliance challenges. By acting as a “data exhaust pipeline,” these systems risk ingesting sensitive intellectual property, corporate secrets, or even employee credentials, making the training sets a high-value target for cyberattacks. Furthermore, the “observer effect” suggests that employees may inadvertently degrade the quality of training data by altering their behavior when they know they are being monitored.

This creates a complex tension between the need for high-quality training inputs and the fundamental right to privacy and trust within the modern workforce. If workers feel every pause or error is being scrutinized by an algorithm, the resulting data may reflect a performance rather than actual work habits. Such a dynamic could lead to “garbage in, garbage out” scenarios, where the AI learns sanitized or inefficient versions of reality, ultimately undermining the efficiency it was meant to provide.

Navigating the Ethics of Workplace Data Harvesting

To manage the transition toward AI-driven workflows, organizations must establish clear boundaries between training and surveillance. Robust data governance frameworks are essential to ensure that keystroke and screen data are used exclusively for model improvement rather than performance evaluations. Companies should also prioritize transparency, giving employees clear disclosures on what is being tracked and how it affects their roles.

Moving forward, the focus should shift toward decentralizing data collection and integrating local processing to minimize security vulnerabilities. Organizations that succeed in this transition will likely be those that adopt “privacy-by-design” principles, ensuring that agents learn from collective patterns rather than individual identities. By fostering a culture of mutual benefit where AI reduces the burden of labor rather than increasing the pressure of oversight, enterprises can navigate the complex balance of innovation and worker autonomy.