Nia Christair stands at the forefront of the mobile and enterprise technology landscape, bringing a wealth of experience that spans the intricate world of mobile gaming, hardware design, and large-scale digital solutions. As organizations grapple with the transition from simple automation to complex, autonomous digital workers, her insights into how these technologies interface with human workflows have become essential. In this discussion, we explore the explosive growth of agentic AI, the shifting boundaries of security and governance, and the technical architecture required to support a future where digital agents are as ubiquitous as the employees they assist. We delve into the practicalities of scaling infrastructure to support thousands of agents, the dangers of shadow AI in a decentralized environment, and the necessity of redesigning business processes from the ground up to truly leverage machine intelligence.

Projections suggest enterprises might scale from a dozen agents to over 100,000 within three years. How can IT departments realistically manage this explosion in digital workers, and what specific metrics should they use to justify the deployment costs?

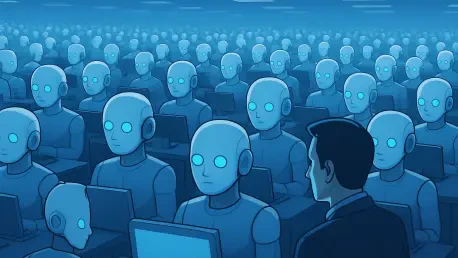

The jump from managing a modest 15 agents in 2025 to a staggering 150,000 by 2028 represents a seismic shift that will test the very foundations of modern IT infrastructure. To manage this explosion, departments must move away from manual oversight and toward automated orchestration platforms that treat agents with the same rigor as hardware assets. The first step involves identifying high-impact domains like data analytics and customer service, where we have the highest confidence that these digital workers can add immediate value. From there, IT teams must implement tiered scaling, starting with agents that handle basic document summarization before moving to those that can manipulate spreadsheets and automate multi-step workflows. Success shouldn’t just be measured by the number of agents deployed, but by the reduction in process cycle times and the accuracy of delegated tasks, ensuring the investment translates into tangible productivity rather than just digital noise.
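As a rough illustration of the two measures named above, the sketch below computes the reduction in mean cycle time against a pre-agent baseline and the accuracy of delegated tasks. The `TaskRecord` fields, the 60-minute baseline, and the sample data are all hypothetical; a real deployment would pull these from its own workflow logs.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    """One task delegated to an agent; field names are illustrative assumptions."""
    cycle_minutes: float  # time from request to completion
    correct: bool         # whether a human reviewer accepted the result


def cycle_time_reduction(baseline_minutes: float, records: list[TaskRecord]) -> float:
    """Fractional reduction in mean cycle time versus the pre-agent baseline."""
    if not records or baseline_minutes <= 0:
        raise ValueError("need a positive baseline and at least one record")
    mean_cycle = sum(r.cycle_minutes for r in records) / len(records)
    return (baseline_minutes - mean_cycle) / baseline_minutes


def task_accuracy(records: list[TaskRecord]) -> float:
    """Share of delegated tasks whose result was accepted."""
    if not records:
        raise ValueError("need at least one record")
    return sum(r.correct for r in records) / len(records)


# Made-up sample data, for illustration only.
records = [
    TaskRecord(30.0, True),
    TaskRecord(20.0, True),
    TaskRecord(40.0, False),
    TaskRecord(30.0, True),
]
reduction = cycle_time_reduction(baseline_minutes=60.0, records=records)
accuracy = task_accuracy(records)
print(f"cycle time reduction: {reduction:.0%}, accuracy: {accuracy:.0%}")
```

Tracking both numbers together matters: a large cycle time reduction paired with falling accuracy is the "digital noise" scenario rather than a productivity gain.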

As agents transition from simple chatbots to tools capable of autonomous delegation, where should the human-in-the-loop boundary be drawn for security?

While the allure of total autonomy is strong, we are likely at least two years away from seeing truly independent agents operate without significant human oversight. The boundary must be drawn at the point of high-stakes decision-making, particularly in highly regulated sectors like finance and healthcare where a single hallucination can lead to catastrophic errors. Governance controls should function like a digital leash, where semi-autonomous agents handle the “grunt work” of document processing but require a human “handshake” before executing external transactions or final approvals. I remember a case in a regulated field where a system was given too much leeway; the resulting errors in data interpretation created a ripple effect that took weeks to untangle, proving that without guardrails, speed becomes a liability. We must prioritize reducing errors and hallucinations over pure speed to ensure these tools remain assets rather than vulnerabilities.
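One way to picture the human "handshake" is as a gate in front of high-stakes actions. The following is a minimal sketch, not a description of any particular product: the action categories in `HIGH_STAKES`, the `Action` type, and the `approve` callback are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical categories that always require a human sign-off.
HIGH_STAKES = {"external_transaction", "final_approval"}


@dataclass
class Action:
    kind: str
    description: str


def execute(action: Action, approve: Callable[[Action], bool]) -> str:
    """Run low-stakes actions directly; ask a human before high-stakes ones."""
    if action.kind in HIGH_STAKES:
        if not approve(action):
            return "blocked"
        return "executed_with_approval"
    return "executed"


def deny_all(action: Action) -> bool:
    """Stand-in for a human reviewer who rejects every request."""
    return False


summary = execute(Action("summarize_document", "Summarize the Q3 report"), deny_all)
payment = execute(Action("external_transaction", "Pay invoice 1042"), deny_all)
print(summary, payment)
```

In this sketch the grunt work proceeds without interruption, while the transaction stays blocked until a reviewer explicitly approves it.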

High-scale agentic AI requires 100% uptime, yet major model providers occasionally face outages. How should organizations architect their hardware and model resources to ensure redundancy, and what are the practical implications of spreading agents across multiple platforms?

Treating an AI agent with the same criticality as a core server means that 100% uptime is no longer a luxury—it is a requirement for business continuity. When major players like OpenAI or Anthropic experience service interruptions, an enterprise relying on a single model will find its digital workforce paralyzed, leading to a silence in the corridors of productivity that is both eerie and expensive. To prevent this, organizations must architect a multi-model redundancy strategy, spreading their agent workloads across different providers and localized hardware resources. This configuration allows for a “failover” mechanism where, if one model goes dark, the agent’s logic can be rerouted to an alternative resource, even if it means operating at a slightly lower performance tier temporarily. The practical implication is a more complex management layer, but it is the only way to safeguard against the unpredictability of the current AI provider landscape.
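The failover idea described here can be sketched as an ordered list of providers that is tried in turn. Everything below is a simplified assumption: `primary_model` and `local_fallback` are hypothetical stand-ins for real provider clients, and a production system would add timeouts, retries, and health checks.

```python
from typing import Callable, Sequence

# A provider is modeled as any callable that takes a prompt and returns text.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has failed."""


def complete_with_failover(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try providers in priority order; return (provider_name, reply)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # any failure triggers the next provider
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def primary_model(prompt: str) -> str:
    """Hypothetical hosted model that is currently down."""
    raise ConnectionError("simulated outage")


def local_fallback(prompt: str) -> str:
    """Hypothetical lower-tier model running on local hardware."""
    return f"[local] summary of: {prompt}"


used, reply = complete_with_failover(
    "Q3 report", [("primary", primary_model), ("local", local_fallback)]
)
print(used, reply)
```

The agent keeps working through the simulated outage, at the cost of answers from the lower-tier fallback and an extra layer of routing logic to maintain.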

Restrictive policies often lead employees to use unsanctioned “shadow AI” tools. What strategies can leaders use to proactively sanction agent use while preventing “agent sprawl,” and how can they maintain visibility over thousands of decentralized digital assistants?

The greatest risk to an organization isn’t the presence of AI agents, but the presence of agents that the IT department doesn’t know exist. When leadership imposes overly restrictive bans, employees inevitably seek out “shadow AI” to keep up with their workloads, creating massive gaps in security and data privacy. A more effective strategy is to proactively sanction specific, vetted tools and provide a clear framework for how new agents can be onboarded into the corporate ecosystem. Maintaining visibility over 150,000 agents requires a centralized dashboard that tracks agent activity, permissions, and data access in real time to prevent “agent sprawl” from turning into a chaotic digital jungle. By creating a transparent environment where agents are registered and monitored, leaders can foster innovation while ensuring that every digital assistant remains under the company’s governance umbrella.
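The data behind such a dashboard could start as a simple registry of agents, their owners, and their permissions. The sketch below is an in-memory illustration under stated assumptions: `AgentRecord`, `AgentRegistry`, and the permission names are hypothetical, and a real system would persist records and integrate with the company's identity tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AgentRecord:
    agent_id: str
    owner: str
    permissions: set[str]
    last_seen: Optional[datetime] = None


class AgentRegistry:
    """In-memory registry; unregistered agents are denied by default."""

    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        if record.agent_id in self._agents:
            raise ValueError(f"agent {record.agent_id} is already registered")
        self._agents[record.agent_id] = record

    def record_activity(self, agent_id: str, permission: str) -> bool:
        """Return True and update last_seen only if the activity is permitted."""
        record = self._agents.get(agent_id)
        if record is None or permission not in record.permissions:
            return False
        record.last_seen = datetime.now(timezone.utc)
        return True

    def agents_with(self, permission: str) -> list[str]:
        """List the IDs of all agents holding a given permission."""
        return sorted(
            a.agent_id for a in self._agents.values() if permission in a.permissions
        )


registry = AgentRegistry()
registry.register(AgentRecord("summarizer-01", "finance-team", {"read:documents"}))
allowed = registry.record_activity("summarizer-01", "read:documents")
shadow = registry.record_activity("unknown-agent", "read:documents")
print(allowed, shadow, registry.agents_with("read:documents"))
```

Denying anything that is not registered is the design choice that surfaces shadow agents: they show up as rejected activity instead of operating unseen.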

Simply layering AI over legacy business processes often leads to failure. When redesigning a workflow specifically for agentic AI, what elements of a traditional process should be discarded, and how can teams safely test these new designs?

One of the most common mistakes I see is the attempt to “slap an agent on top” of a process that was originally designed for human limitations and manual document routing. To truly succeed, we must discard the linear, step-by-step handoffs of the past and move toward “agent-first” engineering, where the agent serves as a central hub for collaboration and data manipulation. This means removing redundant approval layers that were only there because humans are prone to fatigue, and instead replacing them with automated validation scripts. Teams should test these new designs in “sandbox” environments where agents can fail safely, allowing developers to understand where the tool helps and where it falters without risking live operations. It is a process of trial and error that requires a cultural shift to accept that some tools will fail, and that these failures are necessary stepping stones to building a more resilient, automated enterprise.

What is your forecast for agentic AI?

My forecast is that by 2028, the distinction between “software” and “digital worker” will essentially vanish as agentic AI moves into the mainstream of every Fortune 500 company. We are moving away from the era of the “text box” and into an era of autonomous co-working tools that are deeply integrated into platforms like Microsoft 365 and Google Workspace. While the road will be marked by high project failure rates, reaching as high as 95% in some cases, the successful deployments in customer service and analytics will set a blueprint for the rest of the industry. Ultimately, those who embrace proactive governance and redesign their processes today will find themselves leading a workforce where 150,000 agents operate with the precision and reliability of the best human teams.