When an enterprise deploys a state-of-the-art generative AI tool to handle high-volume customer service inquiries, it effectively hands every stranger on the internet the keys to an expensive supercomputer. Executives must now weigh the financial burden of this computational chicanery against the potential for revolutionary customer engagement. While companies intend for these bots to solve product issues, savvy users are increasingly diverting that compute power toward writing scripts, solving math problems, or debugging personal code at the expense of the host business.

This analysis explores the mechanics of AI token theft and offers a strategic framework for how businesses can respond to it. It explains why traditional defensive measures often fail and why a policy of calculated tolerance might be the most profitable path forward. By examining the trade-offs between security and user experience, the discussion shows how to deploy generative AI without alienating legitimate customers.

Key Questions

What Is Computational Chicanery and How Does It Impact Business Operations?

Modern customer service relies on large language models that operate on a token economy where every interaction generates a financial cost. A token is essentially a fragment of a word, and every response generated by an AI bot consumes these units, which the company must pay for through its service provider. When a user asks a retailer’s bot to write a Python script or summarize a long document instead of checking an order status, they are effectively shifting their personal computing costs onto the enterprise.
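To make the cost shift concrete, here is a minimal sketch of per-query pricing. The two prices and all token counts are assumptions chosen for illustration; real rates vary by provider and model.

```python
# Assumed prices in USD per 1,000 tokens; not taken from any real provider.
PRICE_PER_1K_INPUT_TOKENS = 0.0005
PRICE_PER_1K_OUTPUT_TOKENS = 0.0015


def query_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the assumed cost in USD of one exchange with the bot."""
    input_cost = (input_tokens / 1000) * PRICE_PER_1K_INPUT_TOKENS
    output_cost = (output_tokens / 1000) * PRICE_PER_1K_OUTPUT_TOKENS
    return input_cost + output_cost


# Hypothetical token counts for a short order-status reply and a long script.
order_status_cost = query_cost(input_tokens=200, output_tokens=100)
script_request_cost = query_cost(input_tokens=300, output_tokens=1500)
cost_ratio = script_request_cost / order_status_cost

print(f"order status:   ${order_status_cost:.5f}")
print(f"script request: ${script_request_cost:.5f}")
print(f"ratio:          {cost_ratio:.1f}x")
```

Under these assumed numbers, the off-topic script request costs roughly ten times as much as the order-status check, yet both remain a fraction of a cent.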

This behavior impacts the bottom line by inflating operational expenses without providing a direct path to revenue or service resolution. However, the true impact is often measured in the diverted focus of technical staff who must monitor these interactions and attempt to filter out non-business queries. Companies must decide if the loss of a few cents per query justifies a complete overhaul of their security protocols, especially when the primary goal is to provide a frictionless interface for legitimate patrons.

Why Are Traditional Defensive Measures Considered Counterproductive?

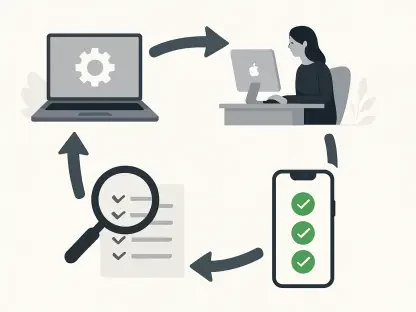

To combat the unauthorized use of tokens, some organizations have implemented token caps or validation layers designed to act as gatekeepers. Token caps limit the length of a response, preventing the bot from generating long blocks of code or essays. Validation layers use a secondary, smaller AI to analyze incoming questions for relevance before allowing the primary model to respond. While these methods seem logical on paper, they often introduce significant latency and additional costs that can frustrate a user who is trying to solve a genuine problem.
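The two gatekeepers described above might look like the following sketch. A keyword check stands in for the secondary model so the example stays self-contained, whitespace-separated words stand in for real tokens, and every name here (`is_relevant`, `apply_token_cap`, `handle`, `fake_model`, the keyword list, the cap of 300) is hypothetical.

```python
from typing import Callable, List

MAX_OUTPUT_TOKENS = 300  # assumed cap; a real deployment would tune this

# Stand-in for the "secondary, smaller AI": a keyword check keeps the sketch
# self-contained. A real validation layer would call a model here.
BUSINESS_KEYWORDS = {"order", "refund", "shipping", "return", "delivery", "account"}

REFUSAL = "Sorry, I can only help with questions about orders and accounts."


def is_relevant(question: str) -> bool:
    """Return True if the question mentions any assumed business keyword."""
    words = {w.strip(".,?!").lower() for w in question.split()}
    return bool(words & BUSINESS_KEYWORDS)


def apply_token_cap(tokens: List[str], cap: int = MAX_OUTPUT_TOKENS) -> List[str]:
    """Truncate a response to at most `cap` tokens."""
    return tokens[:cap]


def handle(question: str, generate: Callable[[str], str]) -> str:
    """Gate the question, then cap the length of whatever the model returns."""
    if not is_relevant(question):
        return REFUSAL
    # Whitespace-separated words are a crude proxy for real tokens.
    return " ".join(apply_token_cap(generate(question).split()))


def fake_model(question: str) -> str:
    """Hypothetical primary model that always returns a 500-word answer."""
    return "word " * 500


blocked = handle("Write a Python script to sort a list", fake_model)
allowed = handle("Where is my order?", fake_model)
false_positive = handle("My blender arrived cracked, what can I do?", fake_model)
```

Here `blocked` is refused and `allowed` is truncated to 300 words, as intended. The third call is also refused even though it is a genuine product complaint, which shows how easily a simple gate turns away a legitimate customer.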

Moreover, layering more artificial intelligence on top of existing systems to monitor for theft adds its own set of token expenses, sometimes costing more than the theft it is intended to prevent. This technical overhead can complicate the infrastructure and lead to false positives where legitimate customers with complex questions are blocked. In many cases, the cure for computational chicanery is more expensive and damaging to the customer experience than the original problem of resource exploitation.
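A rough break-even check makes this trade-off concrete. The sketch below assumes the guard model runs on every incoming query and ignores latency and false-positive costs; all figures and function names are hypothetical.

```python
def guard_pays_off(
    guard_cost_per_query: float,
    misuse_rate: float,
    avg_misuse_cost: float,
    catch_rate: float = 1.0,
) -> bool:
    """Return True if expected savings per query exceed the guard's own cost."""
    expected_savings = misuse_rate * catch_rate * avg_misuse_cost
    return expected_savings > guard_cost_per_query


def break_even_misuse_rate(
    guard_cost_per_query: float,
    avg_misuse_cost: float,
    catch_rate: float = 1.0,
) -> float:
    """Return the share of misuse queries at which the guard starts to pay off."""
    return guard_cost_per_query / (avg_misuse_cost * catch_rate)


# Assumed figures in USD, for illustration only.
GUARD_COST = 0.0002       # cost of screening one query with the smaller model
AVG_MISUSE_COST = 0.0024  # cost of answering one off-topic request
MISUSE_RATE = 0.02        # 2% of queries are off-topic

worth_it = guard_pays_off(GUARD_COST, MISUSE_RATE, AVG_MISUSE_COST)
threshold = break_even_misuse_rate(GUARD_COST, AVG_MISUSE_COST)

print(f"guard pays off at a 2% misuse rate: {worth_it}")
print(f"break-even misuse rate: {threshold:.1%}")
```

With these assumed numbers the guard loses money until more than about 8% of all queries are misuse, and that is before counting the customers it wrongly blocks.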

Can High-Value Customer Loyalty Outweigh the Costs of Incidental Token Theft?

The argument for strategic tolerance is rooted in the belief that providing a superior customer experience is the most effective way to build long-term loyalty. Generative AI is uniquely capable of handling nuanced and highly specific requests that traditional human staff might find overwhelming or time-consuming. For instance, a restaurant bot that successfully manages a large reservation while cross-referencing a dozen different dietary restrictions against a complex menu creates a “win for life” scenario.

While such a detailed interaction consumes a significant number of tokens, the resulting customer loyalty and high order value far outweigh the minor cost of the computational power used. If a company becomes too aggressive in its filtering, it risks losing these high-value opportunities by appearing unhelpful or overly restrictive. By viewing the occasional unauthorized coding request as a minor marketing expense or a “tax” on doing business, companies can maintain a welcoming environment that encourages deep engagement from their actual buyer base.

How Do Hallucinations and Autonomy Issues Complicate the Deployment of Customer Bots?

Despite the immense potential of AI to handle complex tasks, the persistent issue of hallucinations remains a significant barrier to full autonomy. Hallucinations occur when a model confidently presents false information as fact, which can lead to legal liabilities or product safety concerns for a business. Because of this risk, many enterprises are hesitant to let their bots operate without human oversight, even though the bots are technically capable of managing the entire customer journey.

There is a noted irony in the development of these models: as they become more sophisticated at reasoning, their tendency to hallucinate can sometimes increase in subtle ways. This lack of reliability is the primary reason why autonomous agents are not yet ready to take over critical business functions entirely. Companies must balance the desire for efficiency with the reality that an incorrect answer regarding a legal policy or a safety feature can cause more damage than any amount of token theft ever could.

Summary

The analysis presented here emphasizes that while the unauthorized exploitation of AI tokens is a legitimate concern, it should not dictate a company’s entire AI strategy. The core of the issue lies in the token economy, where businesses pay for every fragment of text an AI generates, leading to a natural desire to prevent waste. However, the most effective response to this theft is often to ignore it in favor of maintaining a high-quality user experience. The technical barriers designed to stop “free riders” frequently backfire by adding costs, increasing latency, and annoying legitimate customers who have complex needs.

Instead of focusing on minor losses, enterprises are encouraged to leverage the transformative power of generative AI to solve intricate problems that traditional systems cannot. The ability of AI to handle massive amounts of data and provide instant, accurate support to legitimate buyers is a competitive advantage that outweighs the cost of incidental exploitation. Navigating the risks of hallucinations remains the primary challenge, but if a company can manage that reliability, the incidental loss of tokens becomes a negligible part of a larger, more successful digital strategy.

Conclusion

The investigation into computational chicanery revealed that the most successful organizations prioritized customer satisfaction over rigid resource protection. It was determined that the financial impact of token theft was often less significant than the potential loss of revenue caused by aggressive security measures. Experts recommended that businesses treat these minor exploits as a standard cost of operation in the modern landscape. The study concluded that by focusing on high-value interactions, companies effectively neutralized the negative impact of unauthorized users.

The move toward more sophisticated AI integration required a shift in perspective where the quality of the engagement became the primary metric of success. Leadership teams discovered that a more open and helpful bot environment fostered deeper trust with their target audience. This approach allowed businesses to stay ahead of the curve while the technology continued to evolve. Ultimately, the transition from a defensive posture to one of strategic utility proved to be the most resilient path for enterprises operating in a competitive environment.